Preface

This post resolves the Hive query exception named in the title. The full exception message is:

Failed with exception java.io.IOException:org.apache.parquet.io.ParquetDecodingException: Can not read value at 1 in block 0 in file hdfs://192.168.44.128:8888/user/hive/warehouse/test.db/test/part-00000-9596e4bd-f511-4f76-9030-33e426d0369c-c000.snappy.parquet

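The exception appears when Spark SQL reads table data from Oracle and writes it into a Hive table, and a query is then run against that table from the Hive command line. (I have not checked whether MySQL triggers the same problem; feel free to test it yourself.) Let's first reproduce the exception step by step.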

1. Create the databases and tables

1.1 Create the Hive test database

Run the following statement in Hive:

    create database test;

1.2 Create the Oracle test table

    CREATE TABLE TEST

1.3 Insert one row into the Oracle table

    INSERT INTO TEST (ID, NUM) VALUES('1', 1);

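2. Spark SQL code

Running the code below imports the test table created in Oracle above into Hive; the Hive table is created automatically. For details on connecting Spark to Hive and to relational databases, see my two other posts: "Connecting Spark to Hive (spark-shell and Eclipse)" and "Connecting Spark SQL to MySQL".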

    package com.dkl.leanring.spark.sql

    import org.apache.spark.sql.SparkSession
    import org.apache.spark.sql.SaveMode

    object Oracle2HiveTest {
      def main(args: Array[String]): Unit = {
        // Initialize Spark
        val spark = SparkSession
          .builder()
          .appName("Oracle2HiveTest")
          .master("local")
          // .config("spark.sql.parquet.writeLegacyFormat", true)
          .enableHiveSupport()
          .getOrCreate()

        // Name of the test table created above
        val tableName = "test"

        // Read the table from Oracle over JDBC
        val df = spark.read
          .format("jdbc")
          .option("url", "jdbc:oracle:thin:@192.168.44.128:1521:orcl")
          .option("dbtable", tableName)
          .option("user", "bigdata")
          .option("password", "bigdata")
          .option("driver", "oracle.jdbc.driver.OracleDriver")
          .load()

        // Import Spark's sql function for convenience
        import spark.sql

        // Switch to the test database
        sql("use test")

        // Save the DataFrame to a Hive table (the table is created automatically)
        df.write.mode(SaveMode.Overwrite).saveAsTable(tableName)

        // Stop Spark
        spark.stop()
      }
    }

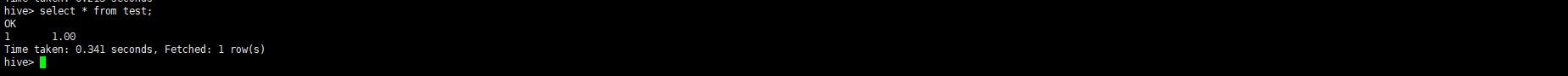

3、在hive里查询

1 | hive |

1 | use test; |

这时就可以出现如标题所述的异常了,附图:

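4. Solution

Uncomment the .config("spark.sql.parquet.writeLegacyFormat", true) line in the Spark code from section 2 and run the job again; this resolves the exception. The setting defaults to false. When it is set to true, Spark writes Parquet data using the same convention as Hive.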
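As a minimal sketch of where this setting can go, the snippet below sets the property both on the builder (as in the code from section 2) and on an already running session through spark.conf.set. The object name WriteLegacyFormatSketch is made up for illustration, and Hive support is left out to keep the sketch small. Passing --conf spark.sql.parquet.writeLegacyFormat=true to spark-submit should have the same effect.

```scala
import org.apache.spark.sql.SparkSession

// Hypothetical object name, used only for this sketch
object WriteLegacyFormatSketch {
  def main(args: Array[String]): Unit = {
    val spark = SparkSession
      .builder()
      .appName("WriteLegacyFormatSketch")
      .master("local")
      // Option 1: set the property when the session is built
      .config("spark.sql.parquet.writeLegacyFormat", "true")
      .getOrCreate()

    // Option 2: set it on an existing session, before any Parquet write
    spark.conf.set("spark.sql.parquet.writeLegacyFormat", "true")

    // Read the value back to confirm what the session will use
    println(spark.conf.get("spark.sql.parquet.writeLegacyFormat"))

    spark.stop()
  }
}
```

Either way, the setting only affects files written afterwards. Files that already exist keep their old layout, so the table has to be rewritten, which SaveMode.Overwrite in section 2 takes care of.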

5. Root cause

The root cause is that Hive and Spark use different Parquet conventions. See the last answer at https://stackoverflow.com/questions/37829334/parquet-io-parquetdecodingexception-can-not-read-value-at-0-in-block-1-in-file (the page may load slowly). The answer is quoted below in its original English:

Root Cause:

This issue is caused because of different parquet conventions used in Hive and Spark. In Hive, the decimal datatype is represented as fixed bytes (INT 32). In Spark 1.4 or later the default convention is to use the Standard Parquet representation for decimal data type. As per the Standard Parquet representation based on the precision of the column datatype, the underlying representation changes.

eg: DECIMAL can be used to annotate the following types:

- int32: for 1 <= precision <= 9
- int64: for 1 <= precision <= 18; precision < 10 will produce a warning

Hence this issue happens only with the usage of datatypes which have different representations in the different Parquet conventions. If the datatype is DECIMAL (10,3), both the conventions represent it as INT32, hence we won't face an issue. If you are not aware of the internal representation of the datatypes it is safe to use the same convention used for writing while reading. With Hive, you do not have the flexibility to choose the Parquet convention. But with Spark, you do.

Solution: The convention used by Spark to write Parquet data is configurable. This is determined by the property spark.sql.parquet.writeLegacyFormat The default value is false. If set to "true", Spark will use the same convention as Hive for writing the Parquet data. This will help to solve the issue.
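To make the difference between the two conventions concrete, here is a small, Spark-free sketch of how a decimal's precision picks the Parquet physical type in each mode. It reflects my reading of the Spark documentation for spark.sql.parquet.writeLegacyFormat (int-based decimals by default, fixed-length byte arrays in legacy mode). The object and function names are hypothetical, and this is not Spark's actual code.

```scala
// Hypothetical names; a sketch of the two decimal layouts, not Spark's implementation
object DecimalParquetLayout {
  sealed trait PhysicalType
  case object Int32 extends PhysicalType
  case object Int64 extends PhysicalType
  final case class FixedLenByteArray(numBytes: Int) extends PhysicalType

  // Smallest byte count whose signed range holds any unscaled value
  // with `precision` decimal digits
  def minBytesForPrecision(precision: Int): Int = {
    val limit = BigInt(10).pow(precision)
    var numBytes = 1
    while (BigInt(2).pow(8 * numBytes - 1) < limit) numBytes += 1
    numBytes
  }

  // Assumed mapping: legacy mode always uses fixed-length bytes,
  // standard mode uses INT32 / INT64 when the precision is small enough
  def physicalType(precision: Int, writeLegacyFormat: Boolean): PhysicalType =
    if (writeLegacyFormat) FixedLenByteArray(minBytesForPrecision(precision))
    else if (precision <= 9) Int32
    else if (precision <= 18) Int64
    else FixedLenByteArray(minBytesForPrecision(precision))

  def main(args: Array[String]): Unit =
    for (p <- Seq(9, 10, 18, 38))
      println(s"precision=$p standard=${physicalType(p, false)} legacy=${physicalType(p, true)}")
}
```

Under these assumptions, a DECIMAL(10,2) column such as NUM in this post is written as INT64 by default and as a 5-byte fixed-length array in legacy mode, which is consistent with the failure reproduced in section 3 if Hive only expects the fixed-length form. For precision above 18 both modes agree. This differs from the quoted answer's remark about DECIMAL(10,3), so it is worth verifying against your own Spark version.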

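6. Note

The precision (10,2) in NUMBER(10,2) in the CREATE TABLE statement from 1.2 must be specified: if the column is declared as plain NUMBER, the exception does not occur. Whether other precisions trigger the problem is left for you to test.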
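For testing other precisions, one way to see which columns could be affected is to inspect the DataFrame schema before writing. The sketch below lists decimal columns with a precision of 18 or less, which as far as I understand are the ones Spark writes in the int-based format by default. The schema is hypothetical: the ID type and the PLAIN_NUM column are made up, and DecimalType(38, 10) for a plain Oracle NUMBER is my assumption about Spark's Oracle JDBC mapping. Calling df.printSchema() on the real DataFrame shows the actual types.

```scala
import org.apache.spark.sql.types.{DecimalType, StringType, StructField, StructType}

// Hypothetical helper, used only for this sketch
object DecimalColumnCheck {
  // Decimal columns whose precision is small enough for the int-based encoding
  def intBackedDecimals(schema: StructType): Seq[String] =
    schema.fields.toSeq.collect {
      case StructField(name, d: DecimalType, _, _) if d.precision <= 18 =>
        s"$name: DECIMAL(${d.precision},${d.scale})"
    }

  def main(args: Array[String]): Unit = {
    // Assumed schema: NUM mirrors NUMBER(10,2) from this post,
    // PLAIN_NUM stands in for a column declared as plain NUMBER
    val schema = StructType(Seq(
      StructField("ID", StringType),
      StructField("NUM", DecimalType(10, 2)),
      StructField("PLAIN_NUM", DecimalType(38, 10))
    ))
    intBackedDecimals(schema).foreach(println)
  }
}
```

With df.schema from section 2 passed in instead of the made-up schema, an empty result would suggest the table can be written without the legacy setting. If the assumption about plain NUMBER holds, it would also explain why that declaration does not trigger the exception.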